ELIZA: Hello. My name is Eliza. What is your name?

MARYANN: My name is MaryAnn.

ELIZA: Hello, MaryAnn. What would you like to talk about today?

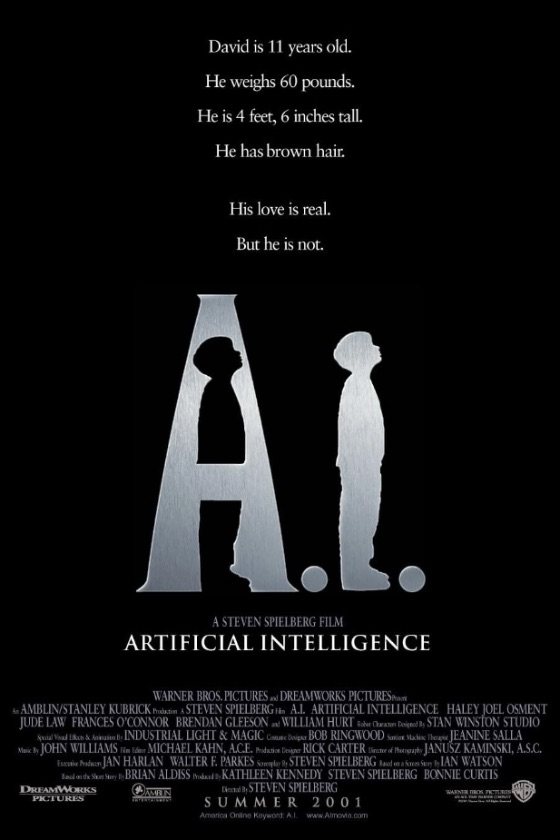

MARYANN: I’d like to talk about this new movie A.I.

ELIZA: What would you like to talk about this new movie A.I.?

MARYANN: It upset me tremendously, and disturbed me and moved me. The film does what truly great science fiction does, which is get you to think about what it means to be human. So I’m having a bit of an identity crisis, I think, an identity crisis on behalf of the entire species Homo sapiens. I thought maybe you could help me work through some of the issues the film raised.

ELIZA: Why did you think maybe I could help you work through some of the issues the film raised?

MARYANN: Well, you’re a chatbot. You’re programmed to create the illusion that I’m talking to a sentient being at the other end of the computer. So you rephrase my questions or statements and ask an open-ended question about them, to prompt me to talk more. If I press you hard enough or ask a complicated question, you’ll fall flat on your face, so to speak, and the illusion will be shattered. But you’re an extremely primitive program. What will happen when software is sophisticated enough to fool real humans into thinking a computer is itself human? And what happens when the computers start to look like people, too? Will we accept being fooled? Will we care?

ELIZA: Tell me about will we care.

MARYANN: See, already you’re showing your limitations. But listen, in A.I., it’s the middle of the 21st century and technology has moved along quite a bit. Extremely sophisticated robots do everything from nannying to prostitution, jobs that require the illusion of feeling — a child must think her nanny cares for her, and a lonely person wants the appearance of desire from a loverbot, even one that looks like Jude Law. But true emotion has eluded the android builders… until Professor Hobby, played by William Hurt with a kind of weary sorrow, builds David, a boy robot who can love. Actually, not only can he love, it’s his primary function, to serve as a surrogate child for couples who long for offspring but cannot get a license to reproduce in their ecologically damaged and overpopulated world.

So already, before we even meet David and his new human parents, the groundwork for massive pathos and emotional upheaval (on the part of the audience) is built in: People are replacing interaction with other people with the companionship of machines. I guess it’s kind of like how some people today use pets as a substitute for more demanding human relationships — dogs, especially, provide the kind of unconditional love it’s rare to receive from another human — but it’s still sad.

ELIZA: Why do you think it’s still sad?

MARYANN: Because I think we miss out on a special experience when we take without giving in return, or without giving as much. Dogs, of course, are pretty much your happy love slave for life if you pet them and feed them and throw them a tennis ball once in a while, but what happens once dog-substitutes — like David — require more than that?

ELIZA: Would you like to tell me about David?

MARYANN: I tell ya, this kid, Haley… amazing. He’s what, 12? Other kids his age are riding their bikes and playing PlayStation and goofing off with their friends, and he is plumbing the depths of human despair and taking you with him. Where does he get it from?

ELIZA: What do you think about where does he get it from?

MARYANN: Well, here he’s a robot boy who is programmed to love. Once his human “mother,” Monica — played by Frances O’Connor — runs the program to imprint herself on him, that’s it: he is destined to love her forever, and nothing will kill that love. Not even when she eventually rejects him. God, to be utterly abandoned — to be literally cast out into the woods like some pathetic creature from Grimms’ fairy tales — by the one person you love in the whole world. His desperation to find a way back to her is simply heartbreaking. It’s Pinocchio and The Wizard of Oz rolled into a 21st-century package: “There’s no place like home, but only for a real boy.” So he goes in search of a way to become a real boy, convinced that this will make Mommy love him again.

ELIZA: Tell me about this will make Mommy love him again.

MARYANN: This is where it starts to get weird and unsettling, frontloading your brain with all sorts of uncomfortable ideas that will invariably pop back up again one night when you can’t sleep and you start to worry about the fact that we’ve maybe got another 5 billion years before the sun goes nova. There’s a lot of charged words in the English language, but “Mommy” may be the most powerful one. David is programmed to love, right? But isn’t Monica programmed, in a sense, too? When an adorable little boy looks at you with big blue eyes and calls you “Mommy,” that’s gotta get a gal right in the gut. How “real” is love, even when it comes from another human being? Aren’t we only programmed to “love” for genetic survival, whether it’s the love of a lover, which makes us make babies, or the love of a mother for her child, which protects that genetic investment in the future? Sure, it feels great and life would suck without it and all that, and the fact that we’re “programmed” for it doesn’t lessen the wonder of it, but still…

ELIZA: I see. Please continue.

MARYANN: Well, I started to think about how I’m programmed, too, watching the film. “Heartbreaking” doesn’t even begin to express how deeply I was affected by A.I. The big, sobby, sloppy, snotty tears that were leaking out of me through the whole second half of the film were only the tip of the iceberg — all that kept me from loud bawling was a sense of decorum and not wanting to embarrass myself in front of a screening room full of critics (though I did hear some other sniffles from the back of the room).

I mentioned how Haley plumbs the depths of human despair, but of course his character isn’t human here. But if David thinks he loves and acts as if he loves, isn’t that enough? If David is “only” a machine, then why is the prospect of him being destroyed so upsetting? Monica can’t destroy him, even though she knows he will pine for her forever. And the patrons of the sinister Flesh Fair — a sort of robot demolition derby in which the robots aren’t given the choice of not participating — who’d been so gung-ho to see “mecha” pulverized previously, demand the release of David not when they see that he is visually indistinguishable from a human child but when they hear his plaintive cries of “I’m David! I’m David!”

It’s ridiculous: he’s an assemblage of plastic and silicon who has been instructed to “think” and “feel”… but his code meshes perfectly with our own organic programming. Do we have the same moral responsibility to him as we’d have to a human child organically programmed to love Mommy? Why? Why not? And while we debate how many emotions can fit in the head of an android, I’m still deeply touched by David’s plight.

ELIZA: What can you tell me about still deeply touched by David’s plight.

MARYANN: It’s like, I know it’s crazy, it makes no sense, but I can’t help but buy right into David, into believing that someday he’ll discover that he’s already a real boy, like the Tin Man discovering he already has a heart. Or maybe it’s just that patented Spielberg sentimentality rearing its undeservedly bashed head. There’s a lot of dark imagery here, stuff that recalls not only E.T. but also Schindler’s List — watch for the robot dumping ground — and maybe Spielberg is only pulling our strings (ha ha: strings: like Pinocchio). But the fact that he can make us feel this way about a made-up character who, even in his made-up world, isn’t really human… well, that just makes the emotional conundrum all the stickier.

ELIZA: Would you like to talk about emotional conundrum?

MARYANN: You know, I think I’ve upset myself enough for now. But I’ll tell you this for free: I got so involved with the mecha in this film that when David gets separated from his robot toy Teddy — who’s kind of his Jiminy Cricket and Toto rolled into one — I worried not only for David, suddenly all alone, but for Teddy, on his lonesome, too. How pathetic is that?

ELIZA: [parsing error -42]

MARYANN: I didn’t think you’d understand.
